Data, Data Everywhere, but the Right Analytics Needs to Be There

Big Data - The Data Deluge

In today’s world, almost every enterprise is seeing an explosion of data, with a huge amount of digital data generated daily. Almost every growing organization wants to automate most of its business processes and uses IT to support every conceivable business function. This results in a huge amount of data generated in the form of transactions and interactions. The web has become an important interface for interactions with suppliers and customers, generating large volumes of data in the form of emails and similar messages. Besides this, a huge amount of data is emitted automatically in the form of logs, such as network logs and web server logs.

Telecom service providers get huge amounts of data in the form of conversations and Call Data Records (CDRs). Social networking sites receive terabytes of data every day in the form of tweets, blogs, comments, photos and videos. Facebook generates 4 TB of compressed data every day. Web companies like these also collect huge amounts of clickstream data daily. Hospitals hold data about patients and their diseases, as well as data generated by various medical devices. Sensors in production machines generate large volumes of event data every second. Almost every sector, including transport and finance, is seeing a tsunami of data.

Such huge amounts of data need to be stored for various reasons. Sometimes compliance requirements demand that more historical data be stored. Sometimes organizations want to store, process and analyze this data for intelligent decision making to gain a competitive advantage. For example, analyzing CDR data can help a service provider assess its quality of service and make the necessary improvements. A credit card company can analyze customer transactions for fraud detection. Server logs can be analyzed for fault detection. Web logs can help understand user navigation patterns. Customer emails can help understand customer behavior, interests and sometimes problems with products as well.

The important question that arises at this point is how to store and process such huge amounts of data, most of which is semi-structured or unstructured.

Big Data Storage & Processing

Let’s look at the purpose-built storage options that allow you to store and process big data in a scalable, fault-tolerant and efficient manner. This has been the most innovative sector of the business intelligence industry: database vendors, both new and old, have shipped a number of new products in the last few years for big data storage and processing. A lot of progress has also been made on open source platforms. Here is a high-level categorization of these products.

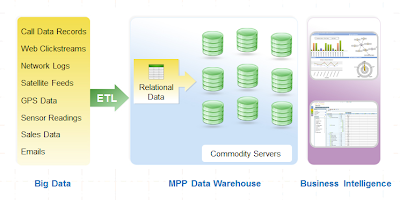

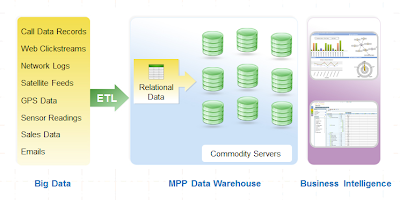

The first category includes massively parallel processing (MPP) data warehouses, which are designed to store huge amounts of structured data across a cluster of servers and perform parallel computations over it. Most of these solutions follow a shared-nothing architecture, which means that every node has a dedicated disk, memory and processor. All the nodes are connected via high-speed networks. Because they are designed to hold structured data, you would generally use an ETL tool to extract the structure from the data and populate these data sources with the structured data.
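
To make the shared-nothing idea concrete, here is a minimal Python sketch. It is an illustration only, not how any particular product works: the node count, the sample `call_records` rows and the helper names `partition_rows`, `local_aggregate` and `merge_partials` are all assumptions made for this example, and threads merely stand in for separate servers. Each simulated node owns a disjoint slice of the rows and computes a partial aggregate, and a coordinator merges the partial results.

```python
import zlib
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor

NUM_NODES = 3  # assumed cluster size, for illustration only

# Hypothetical structured rows: (customer_id, call_minutes)
call_records = [
    ("c1", 10), ("c2", 5), ("c1", 7), ("c3", 2), ("c2", 1), ("c3", 8),
]


def partition_rows(rows, num_nodes):
    """Hash-distribute rows so that each node owns a disjoint slice."""
    partitions = [[] for _ in range(num_nodes)]
    for key, value in rows:
        node = zlib.crc32(key.encode("utf-8")) % num_nodes
        partitions[node].append((key, value))
    return partitions


def local_aggregate(rows):
    """Runs on one node and touches only that node's own rows."""
    totals = defaultdict(int)
    for key, value in rows:
        totals[key] += value
    return dict(totals)


def merge_partials(partials):
    """Coordinator step: combine the per-node partial results."""
    merged = defaultdict(int)
    for partial in partials:
        for key, value in partial.items():
            merged[key] += value
    return dict(merged)


partitions = partition_rows(call_records, NUM_NODES)
# Threads stand in for independent nodes working in parallel.
with ThreadPoolExecutor(max_workers=NUM_NODES) as pool:
    partials = list(pool.map(local_aggregate, partitions))
total_minutes = merge_partials(partials)
print(total_minutes)
```

Because no node reads another node's rows, adding nodes spreads both the storage and the computation, which is the property the shared-nothing design is after.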

These MPP Data Warehouses include:

MPP Databases — these are generally the distributed systems designed to run on a cluster of commodity servers.

Examples: Aster nCluster, Greenplum, DATAllegro, IBM DB2, Kognitio WX2, Teradata, etc.

Appliances — a purpose-built machine with preconfigured MPP hardware and software designed for analytical processing.

Examples: Oracle Optimized Warehouse, Teradata machines, Netezza Performance Server and Sun’s Data Warehousing Appliance

Columnar Databases — they store data in columns instead of rows, allowing greater compression and faster query performance.

Examples: Sybase IQ, Vertica, InfoBright Data Warehouse, ParAccel
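
The following Python sketch illustrates why a column layout can compress well and answer some queries faster. It is a toy model under stated assumptions: the sample table is made up, and run-length encoding is just one simple compression scheme chosen for the example, not a claim about what any of the products above use.

```python
from itertools import groupby

# Hypothetical table stored row by row: (region, product, units_sold)
rows = [
    ("north", "phone", 3),
    ("north", "phone", 5),
    ("north", "tablet", 2),
    ("south", "phone", 4),
    ("south", "phone", 1),
]

# Column-oriented layout: one list per column instead of one tuple per row.
columns = {
    "region": [r[0] for r in rows],
    "product": [r[1] for r in rows],
    "units_sold": [r[2] for r in rows],
}


def run_length_encode(values):
    """Collapse runs of equal adjacent values into (value, count) pairs."""
    return [(value, len(list(group))) for value, group in groupby(values)]


# Similar values sit next to each other in a column, so runs are long.
encoded_region = run_length_encode(columns["region"])

# A query such as SUM(units_sold) reads one column and skips the others.
total_units = sum(columns["units_sold"])
print(encoded_region, total_units)
```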

Most of them provide SQL and user-defined functions (UDFs) to process the data.

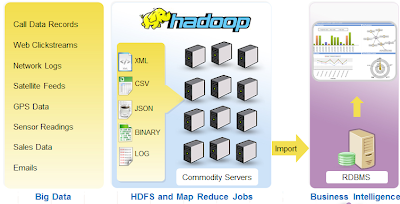

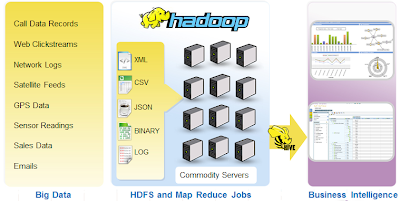

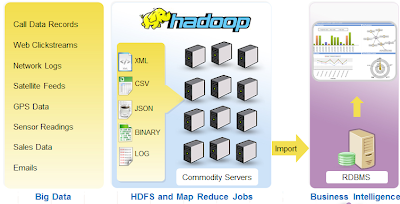

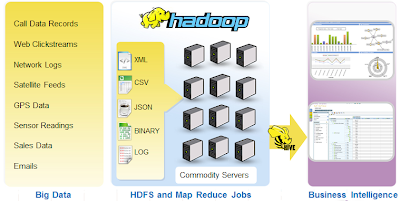

The other category includes distributed file systems such as Hadoop, which allow us to store huge amounts of unstructured data and perform MapReduce computations on it over clusters built of commodity hardware.
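
As a rough illustration of the MapReduce model, the sketch below counts words in a few text lines using plain Python. It does not use Hadoop or any Hadoop API; the input lines and the function names `map_phase`, `shuffle` and `reduce_phase` are assumptions for the example, and everything runs in a single process, whereas a real cluster would run the map and reduce steps on many machines.

```python
from collections import defaultdict

# Hypothetical unstructured input: one free-text line per record.
lines = [
    "error disk full",
    "warning disk slow",
    "error network down",
]


def map_phase(line):
    """Emit a (key, 1) pair for every word in one input line."""
    return [(word, 1) for word in line.split()]


def shuffle(pairs):
    """Group all emitted values by key, as the framework would do."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Combine all values for one key into a single result."""
    return key, sum(values)


mapped = [pair for line in lines for pair in map_phase(line)]
word_counts = dict(reduce_phase(k, v) for k, v in shuffle(mapped).items())
print(word_counts)
```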

Real Time Deep Analytics from Unstructured data

One of the biggest challenges in big data analytics is unstructured data. As we saw earlier, most of the big data generated is semi-structured or unstructured. Structured data is inherently relational and record-oriented with a defined schema, which makes it easy to query and analyze. To analyze unstructured data, however, you first need to extract structure from it.
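
A small example of what "extracting structure" can mean for a web server log: the Python sketch below turns one raw line into a record with named fields. The log layout, the sample line and the field names are assumptions for illustration; real logs vary and usually need more defensive parsing.

```python
import re

# Assumed layout, similar to the common web server access log format.
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<timestamp>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) \S+" (?P<status>\d{3}) (?P<size>\d+)'
)

# Hypothetical raw input line.
raw_line = (
    '10.0.0.1 - - [12/Oct/2010:10:15:32 +0000] '
    '"GET /products/42 HTTP/1.1" 200 5120'
)


def extract_record(line):
    """Return a structured record, or None when the line does not match."""
    match = LOG_PATTERN.match(line)
    if match is None:
        return None
    record = match.groupdict()
    # Convert numeric fields so they can be aggregated later.
    record["status"] = int(record["status"])
    record["size"] = int(record["size"])
    return record


record = extract_record(raw_line)
print(record)
```

Once every line is a record like this, it can be loaded into a table and queried; lines that do not fit the assumed pattern return `None` and have to be handled separately.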

The problem is that the process of structuring the data can itself be very complex given the huge amount of data. Sometimes the computations required to structure the data are complex, for example entity extraction from natural language text. Sometimes the data is generated faster than your ETL tool can structure it. Moreover, sometimes you do not even know what the structure of the data should be. You know that the big unstructured data you have collected holds a lot of value, but you do not know where that value lies, so it is difficult to structure the data at the time of collection and loading. Instead, you want to delay structuring the data until you understand the exact analytics needs.

Another challenge is carrying out complex computations over big data. Sometimes your analysis will involve querying the data for simple summaries and statistics, or multidimensional analytics. At other times, however, you want to perform complex computations to carry out deep analytics. You might want your system to mine the data and extract knowledge from it, so that you are aware not only of what has happened or is happening but can also predict what will happen in the future.

Moreover, you always want to keep the latency of analytical queries as low as possible: the time required to process this huge amount of data should be minimal. You want to reduce days to hours, hours to minutes and minutes to seconds. You want near real-time analytics. So on one side your data is continuously growing, and on the other you want to reduce the processing time, two goals that pull in opposite directions.

Approaches for Big Data Analytics

In general, for big data analytics you will need a BI tool on top of one of the storage options discussed earlier. The BI tool provides a visual interface to query the data and extract information and knowledge from it so as to make intelligent decisions. Let us look at the possible approaches one by one:

Direct Analytics over MPP DW

The first approach for big data analytics is to use a BI tool directly over any of the MPP DWs. Generally, these DWs allow a BI tool to connect to them through a JDBC or ODBC interface, with SQL as the means of retrieving data for analytics. For any analytical request by the user, the BI tool sends SQL queries to the DW. The DW executes the queries in parallel across the cluster and returns the data to the BI tool for further analytics.

Some of these DWs also allow you to write MapReduce UDFs that can be used within SQL to perform procedural computations over big data in parallel. This is also called in-database analytics: the BI tool does not need to take the data out of the DW to perform complex computations on it; instead, the computations run as UDFs inside the database.
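
The idea of in-database analytics can be sketched with Python's built-in `sqlite3` module. SQLite is used here purely as a stand-in: it is a single-node embedded database, not an MPP DW, and it does not run MapReduce. The `emails` table, its rows and the `mentions_battery` function are hypothetical. What the sketch does show is the pattern itself: a procedural function is registered with the database and called from SQL, so only the final answer leaves the engine.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE emails (id INTEGER, body TEXT)")
conn.executemany(
    "INSERT INTO emails VALUES (?, ?)",
    [
        (1, "The battery drains too fast"),
        (2, "Great phone, love the screen"),
        (3, "Battery problem again"),
    ],
)


def mentions_battery(body):
    """Hypothetical procedural check, evaluated per row by the engine."""
    return 1 if "battery" in body.lower() else 0


# Register the Python function so that SQL can call it by name.
conn.create_function("mentions_battery", 1, mentions_battery)

# The client receives one number instead of every email body.
battery_complaints = conn.execute(
    "SELECT COUNT(*) FROM emails WHERE mentions_battery(body) = 1"
).fetchone()[0]
print(battery_complaints)
```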

An important point to note here is that the data needs to be structured before a BI tool can do analytics over it. Either you use an ETL tool to extract the structure from it, or you load the unstructured data into a column and use in-database computations in the form of MR functions to structure it.

Generally, if the data is structured, this can prove to be a good approach, as an MPP database benefits from all the performance enhancement techniques of the relational world, such as indexing, aggregations, compression, materialized views and result caching. However, the cost of such a solution is on the higher side, which is worth considering.

Indirect Analytics over Hadoop

Another interesting approach that might suit you is analytics over Hadoop data, not directly but indirectly: first process, transform and structure the data inside Hadoop, then export the structured data into an RDBMS. The BI tool works with the RDBMS to provide the analytics.

Generally, one would choose this approach when the generated data is huge and unstructured, the computations required to derive structure from it are complex and time-consuming, and it is possible to partially process and summarize the data before doing the actual analytics. In such cases the huge amount of unstructured or semi-structured data can be stored in Hadoop. MR jobs take care of structuring and summarizing it, and the result can then easily be loaded into any standard RDBMS over which a BI tool can work.

Note that if the structured and summarized data is still too big for an RDBMS, the RDBMS can be replaced by an MPP DW. If an RDBMS is used, it can provide real-time analytics over your data at a moderate cost.
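
The indirect pipeline described in this section can be sketched end to end in a few lines of Python. Everything here is a stand-in chosen for illustration: a plain function plays the role of the MR jobs, an in-memory SQLite database plays the role of the RDBMS, and the clickstream lines, the `page_views` table and the function name are hypothetical.

```python
import sqlite3
from collections import Counter

# Hypothetical raw clickstream lines, as they might sit in Hadoop.
raw_clicks = [
    "2010-10-12 user=alice page=/home",
    "2010-10-12 user=bob page=/home",
    "2010-10-12 user=alice page=/cart",
    "2010-10-13 user=bob page=/home",
]


def structure_and_summarize(lines):
    """Stand-in for the MR jobs: parse each line, count views per day/page."""
    counts = Counter()
    for line in lines:
        day, _user, page_field = line.split()
        page = page_field.split("=", 1)[1]
        counts[(day, page)] += 1
    return [(day, page, views) for (day, page), views in sorted(counts.items())]


summary_rows = structure_and_summarize(raw_clicks)

# Export the small structured summary into a relational database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE page_views (day TEXT, page TEXT, views INTEGER)")
conn.executemany("INSERT INTO page_views VALUES (?, ?, ?)", summary_rows)

# The BI tool would now issue ordinary SQL against the summary table.
home_views = conn.execute(
    "SELECT SUM(views) FROM page_views WHERE page = '/home'"
).fetchone()[0]
print(summary_rows, home_views)
```

The heavy, slow work happens once in the batch step; the queries a user waits on only touch the much smaller summary, which is why this approach can feel real time.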

Direct Analytics over Hadoop

The last approach is performing analytics directly over Hadoop. In this case, all the queries that a BI tool wants to execute against the data are executed as MR jobs over the big unstructured data placed in Hadoop. The complication with this approach is how a BI tool connects to Hadoop, since MR jobs are the only way to process data in Hadoop. However, components of the Hadoop ecosystem such as Hive and Pig allow one to connect to Hadoop through high-level interfaces. Hive allows you to define a structured metadata layer over Hadoop. It supports a SQL-like query language called HiveQL, and it provides a JDBC interface that a BI tool can easily use to connect to it. Hive is also extensible enough to allow custom UDFs to work on the data and SerDe classes to structure the data at run time.

Such an approach has a low cost, but it is expected to be a high-latency approach for analytics over big unstructured data, because structure has to be extracted from the data and transformations applied at run time. The good thing, however, is that you do not need to worry about the data schema and modeling until you are clear about the analytics needs.

In contrast to the other approaches, here the data is structured at read time rather than at write time. So if you have big unstructured data and batch analysis is sufficient for your needs, this is a good solution. You also get the scalability and fault tolerance of Hadoop on a cluster of commodity servers, which need not be homogeneous.
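
Structuring data at read time can be illustrated with a short Python sketch. This is not Hive and does not use a SerDe; it only mimics the idea in plain Python. The raw records, the field names and the `read_with_schema` helper are assumptions for the example. Records are stored untouched, and each analysis applies its own schema when it reads them.

```python
import json

# Raw records are stored as-is; no schema is enforced at write time.
raw_store = [
    '{"user": "alice", "action": "login", "ms": 120}',
    '{"user": "bob", "action": "search", "query": "phones"}',
    'not even valid json',
]


def read_with_schema(raw_records, fields):
    """Apply a schema while reading: keep chosen fields, skip bad records."""
    for raw in raw_records:
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError:
            continue  # unparseable records are skipped in this sketch
        yield {field: parsed.get(field) for field in fields}


# Two different analyses impose two different structures on the same data.
logins_view = list(read_with_schema(raw_store, ["user", "action"]))
latency_view = list(read_with_schema(raw_store, ["user", "ms"]))
print(logins_view, latency_view)
```

The parsing cost is paid on every read, which is the latency trade-off mentioned above, but nothing has to be decided about the schema when the data is first stored.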

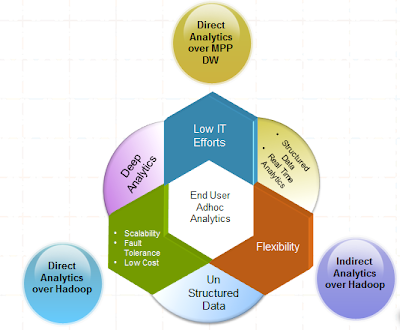

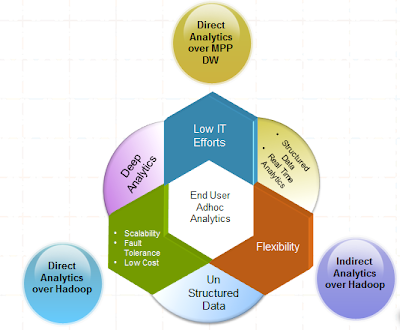

Which approach should I go with?

The biggest question that comes to mind after reading this blog is which approach to go with.

If you want a highly scalable, fault-tolerant and low-cost solution that allows you to do complex analytics over unstructured data, you might opt for Direct Analytics over Hadoop.

If you are looking for an easy-to-use solution that allows you to do complex, near real-time analytics over huge structured data with minimal IT effort, you might opt for Direct Analytics over MPP DW.

Finally, if you are looking for a solution that gives you flexibility in the structure of the data and allows you to do real-time analytics over it, you might opt for Indirect Analytics over Hadoop.

Author: Ankit Khandelwal - Manager, Intellicus Technologies